Last year, I deployed a Kubernetes cluster using Kubespray, after giving OpenShift 4 a try.

I deployed version 1.18.3, which is reaching EOL, and I am now looking into upgrading it.

The Kubespray documentation is pretty straightforward: iterate over their releases, one after the other, re-applying the upgrade playbook and, if needed, forcing the Kubernetes version.

$ cd /path/to/kubespray

$ git status

On branch master

[...]

$ git pull

[...]

$ git tag

[...]

v2.13.2

v2.13.3

v2.13.4

v2.14.0

v2.14.1

v2.14.2

v2.15.0

v2.15.1

[...]
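With a growing list of tags, it can help to print only the releases that come after the one you are on, in version order. A minimal sketch, assuming `sort -V` (GNU coreutils) is available; `tags_after` is a hypothetical helper name, not something Kubespray ships:

```shell
# Hypothetical helper: print the release tags that come after a given one.
# Reads tags on stdin (e.g. from `git tag`); relies on `sort -V`.
tags_after() {
    current="$1"
    grep -E '^v[0-9]+\.[0-9]+\.[0-9]+$' \
        | sort -V \
        | awk -v cur="$current" 'found { print } $0 == cur { found = 1 }'
}

# Usage, from the Kubespray checkout:
#   git tag | tags_after v2.14.0
```

Anything that does not look like `vX.Y.Z` is filtered out, so release candidates or other tags would be ignored.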

My first issue was that I did not use a Kubespray release to deploy my cluster: I just cloned their repository and went with the latest master. It worked perfectly fine, which is a testament to the stability of their code.

Looking at the existing tags in their repository, I tried to figure out which one was the closest to the commit I had used to deploy that cluster. My Kubernetes version, 1.18.3, sat somewhere between Kubespray v2.13 and v2.14.0.

$ git diff 6bc60e021e39b049ec7135bd4cfb4adfce44d1f7..v2.13.3

[...]

$ git diff 6bc60e021e39b049ec7135bd4cfb4adfce44d1f7..v2.13.4

[...]

$ git diff 6bc60e021e39b049ec7135bd4cfb4adfce44d1f7..v2.14.0

[...]

$ git diff 6bc60e021e39b049ec7135bd4cfb4adfce44d1f7..v2.14.1

[...]
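Reading four full diffs is tedious, so a rough first pass could rank the candidate tags by how many files differ from the deployed commit. This is only a sketch: `rank_tags_by_diff` is a hypothetical helper, and a small file count is a hint, not proof that a tag is the closest match.

```shell
# Hypothetical helper: for each candidate tag, count the files that differ
# from a base commit, and print "<count> <tag>" with the smallest diff first.
rank_tags_by_diff() {
    base="$1"
    shift
    for tag in "$@"; do
        count=$(git diff --name-only "$base..$tag" | wc -l | tr -d ' ')
        echo "$count $tag"
    done | sort -n
}

# Usage:
#   rank_tags_by_diff 6bc60e021e39b049ec7135bd4cfb4adfce44d1f7 \
#       v2.13.3 v2.13.4 v2.14.0 v2.14.1
```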

I decided to start from v2.14.0, whose default Kubernetes version is 1.18.8.

Kubespray upgrades should be applied one after the other.

According to their doc, one shouldn’t skip any tag, though judging by their diffs, it looks like we may skip patch releases.

First, we check the changes in the Kubespray sample inventory, figuring out which variables need to be added, removed or changed in our cluster inventory:

$ git diff 6bc60e021e39b049ec7135bd4cfb4adfce44d1f7..v2.14.0 inventory/sample

[...]

$ vi inventory/mycluster/group_vars/all/all.yaml

$ vi inventory/mycluster/group_vars/k8s-cluster/addons.yaml

$ vi inventory/mycluster/group_vars/k8s-cluster/k8s-cluster.yaml

When choosing the target Kubernetes version, make sure that it is handled by Kubespray.

Check for the kubelet_checksums and crictl_versions dictionaries in roles/download/defaults/main.yml.

When in doubt, stick with the one configured in their sample inventory.
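That check can be scripted. The sketch below simply looks for a `<version>:` key in a defaults file; `version_listed` is a hypothetical helper, and the file to pass is an assumption (whichever file holds `kubelet_checksums` in your checkout).

```shell
# Hypothetical helper: succeed if the given file has a "<version>:" key,
# as the checksum dictionaries do. It does not check which dictionary
# the key belongs to, so treat a match as a hint rather than a guarantee.
version_listed() {
    file="$1"
    version="$2"
    grep -Fq -- " ${version}:" "$file"
}

# Usage (DOWNLOAD_DEFAULTS is a placeholder for the defaults file path):
#   version_listed "$DOWNLOAD_DEFAULTS" v1.18.8 && echo "v1.18.8 is listed"
```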

Once our inventory is ready, we could take some time to make sure our cluster is in a healthy state.

Any deployment that can be shut down, any replica count that can be lowered, any workload that can be temporarily removed will shorten the upgrade.

We could also check disk usage, clean up what we can, and make sure there’s no trouble updating package repositories or pulling images.

In my case, I would also check my Ceph cluster, which serves persistent volumes for Kubernetes: ensure that all services are up, and that there’s no risk of a volume getting stuck at some point, waiting on I/O.
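On the Kubernetes side, part of that health check can be reduced to two quick queries. A minimal sketch, with hypothetical helper names; it only looks at node status and Pod phases, and leaves disk usage and Ceph aside:

```shell
# Hypothetical helper: print the names of nodes whose STATUS is not exactly
# "Ready" (this also reports cordoned nodes, shown as Ready,SchedulingDisabled).
nodes_not_ready() {
    kubectl get nodes --no-headers | awk '$2 != "Ready" { print $1 }'
}

# Hypothetical helper: list Pods that are neither Running nor Succeeded.
pods_not_running() {
    kubectl get pods --all-namespaces \
        --field-selector=status.phase!=Running,status.phase!=Succeeded
}
```

If both come back empty, the cluster is at least not visibly broken before the playbook starts.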

Finally, we may start applying our upgrade:

$ ansible-playbook -i ./inventory/mycluster/hosts.yaml ./upgrade-cluster.yml | tee -a upgrade-$(date +%s)-from-1.18.3-to-1.18.8.log
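One caveat with piping into tee: unless the shell has something like pipefail enabled, the pipeline reports tee's exit status, not the playbook's. A sketch of a wrapper that keeps the log and still returns the real status; `run_logged` is a hypothetical name:

```shell
# Hypothetical wrapper: run a command, append its output to a log file while
# still showing it, and return the command's own exit status instead of tee's.
run_logged() {
    log="$1"
    shift
    # The exit status is written to a side file because, without pipefail,
    # a pipeline only reports the status of its last command (tee).
    { "$@" 2>&1; echo "$?" > "$log.rc"; } | tee -a "$log"
    status=$(cat "$log.rc")
    rm -f "$log.rc"
    return "$status"
}

# Usage:
#   run_logged "upgrade-$(date +%s).log" \
#       ansible-playbook -i ./inventory/mycluster/hosts.yaml ./upgrade-cluster.yml
```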

Kubespray starts by checking cluster variables, then pre-pulls and caches assets and container images on our nodes.

Once those checks are done, it upgrades the etcd cluster, all members at once. This is done pretty quickly, and the Kubernetes API did not seem to suffer from it.

Next, it upgrades the Kubernetes API services on the first master node: setting it unschedulable if it was not already, draining it, upgrading the kubelet and the container runtime, making sure the proper kernel modules are loaded, then starting the new API, scheduler and controller Pods.

After upgrading the first master, parts of the Kubespray apps are redeployed, installing the latest CSI & RBAC configurations. Then the other masters are upgraded, one after the other.

Once all masters are up to date, Kubespray upgrades the cluster SDN, in my case Calico.

This goes pretty fast: the playbook applies changes on two nodes at once. I didn’t have much time to check for side effects but, all in all, I didn’t see my apps suffer at that stage.

We’re now done with the most critical parts, and left with those that will affect the availability of our hosted applications.

Kubespray then goes through the remaining nodes, one after the other: cordon, drain, update runtime, restart services, uncordon.

The draining part can take a long time, depending on the size of your nodes and their overall usage.

Every 10.0s: kubectl get nodes

NAME STATUS ROLES AGE VERSION

compute1 Ready worker 314d v1.18.8

compute2 Ready worker 314d v1.18.8

compute3 Ready worker 314d v1.18.8

compute4 Ready worker 314d v1.18.8

infra1 Ready infra 314d v1.18.9

infra2 Ready infra 314d v1.18.9

infra3 Ready,SchedulingDisabled infra 314d v1.18.8

master1 Ready master 314d v1.18.9

master2 Ready master 139d v1.18.9

master3 Ready master 314d v1.18.9
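To follow progress without reading the whole table, the same output can be condensed into a count of nodes per kubelet version. A small sketch, assuming `sort -V` is available; `node_versions` is a hypothetical helper:

```shell
# Hypothetical helper: count nodes per kubelet version (5th column of
# `kubectl get nodes`), printing "<version> <count>" sorted by version.
node_versions() {
    kubectl get nodes --no-headers \
        | awk '{ count[$5]++ } END { for (v in count) print v, count[v] }' \
        | sort -V
}
```

The upgrade round is over once a single line is left.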

Failures may happen: I had the upgrade playbook crash once, when a node drain step timed out, which led me to find a PodDisruptionBudget preventing one Pod (KubeVirt) from being rescheduled. In such a case, we may fix the issue, then re-apply the upgrade playbook. The second run is a bit faster, though it still goes through all the steps that already completed.
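Such budgets can be spotted before the playbook runs into them. A minimal sketch listing every PodDisruptionBudget that currently allows zero disruptions; `blocking_pdbs` is a hypothetical helper, and a budget showing up here is only a candidate for blocking a drain, depending on where its Pods run:

```shell
# Hypothetical helper: print "<namespace>/<name>" for every PodDisruptionBudget
# whose status currently allows no voluntary disruption.
blocking_pdbs() {
    kubectl get poddisruptionbudgets --all-namespaces --no-headers \
        -o custom-columns=NAMESPACE:.metadata.namespace,NAME:.metadata.name,ALLOWED:.status.disruptionsAllowed \
        | awk '$3 == 0 { print $1 "/" $2 }'
}
```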

To avoid such timeouts, I then connected to each node during its drain phase, and made sure there was no Pod stuck in a Terminating state, or left Running while the drain operation should be shutting it down.
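Part of that watch can be done from the API rather than from the node itself. A sketch listing the Pods that kubectl reports as Terminating on a given node; `terminating_pods_on` is a hypothetical helper:

```shell
# Hypothetical helper: print "<namespace>/<name>" for Pods scheduled on the
# given node that kubectl currently shows as Terminating.
terminating_pods_on() {
    node="$1"
    kubectl get pods --all-namespaces --no-headers \
        --field-selector "spec.nodeName=$node" \
        | awk '$4 == "Terminating" { print $1 "/" $2 }'
}

# Usage, while infra3 is being drained:
#   terminating_pods_on infra3
```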

Also note that when re-applying the upgrade playbook, we can speed things up on nodes that were already processed by setting them unschedulable beforehand. In that case the drain is skipped; the container runtime and kubelet are still restarted, with little to no effect on the workloads running there.
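Picking those nodes by hand is error-prone, so a sketch like the following could select them by kubelet version. `nodes_at_version` is a hypothetical helper; after the run, it is worth checking with `kubectl get nodes` whether those nodes still need to be uncordoned.

```shell
# Hypothetical helper: print the names of nodes already running the given
# kubelet version (5th column of `kubectl get nodes`).
nodes_at_version() {
    version="$1"
    kubectl get nodes --no-headers | awk -v v="$version" '$5 == v { print $1 }'
}

# Usage, before re-applying the playbook:
#   for node in $(nodes_at_version v1.18.9); do kubectl cordon "$node"; done
```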

Once all nodes are up to date, Kubespray goes through its apps once again, applying the latest metrics server, ingress controller, registry, and so on, depending on which ones you’ve enabled. In my case, I disabled most of those in my inventory prior to upgrading, to skip those steps.

In about three hours, I was done with my first upgrade (a 10-node cluster, 16G each, overloaded). I could start over, from the next tag:

$ git checkout v2.14.1

$ git diff v2.14.0..v2.14.1 inventory/sample

$ vi inventory/mycluster/xxx

$ ansible-playbook -i ./inventory/mycluster/hosts.yaml ./upgrade-cluster.yml | tee -a upgrade-$(date +%s)-from-1.18.8-to-1.18.9.log

This one went faster, a little under 2 hours. No error.

And the next ones:

$ git checkout v2.14.2

$ git diff v2.14.1..v2.14.2 inventory/sample

$ vi inventory/mycluster/xxx

$ ansible-playbook -i ./inventory/mycluster/hosts.yaml ./upgrade-cluster.yml | tee -a upgrade-$(date +%s)-from-1.18.9-to-1.18.10.log

$ git checkout v2.15.0

$ git diff v2.14.2..v2.15.0 inventory/sample

$ vi inventory/mycluster/xxx

$ ansible-playbook -i ./inventory/mycluster/hosts.yaml ./upgrade-cluster.yml | tee -a upgrade-$(date +%s)-from-1.18.10-to-1.19.7.log

$ git checkout v2.15.1

$ git diff v2.15.0..v2.15.1 inventory/sample

$ vi inventory/mycluster/xxx

$ ansible-playbook -i ./inventory/mycluster/hosts.yaml ./upgrade-cluster.yml | tee -a upgrade-$(date +%s)-from-1.19.7-to-1.19.9.log
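Since every round repeats the same three steps, the sequence can be generated rather than typed. The sketch below only prints the commands for review, it runs nothing: the inventory still has to be edited by hand between the diff and the playbook. `print_upgrade_plan` is a hypothetical helper.

```shell
# Hypothetical helper: given the tag currently deployed and the tags to go
# through, print the commands for each round without executing anything.
print_upgrade_plan() {
    previous="$1"
    shift
    for tag in "$@"; do
        echo "git checkout $tag"
        echo "git diff $previous..$tag inventory/sample"
        echo "ansible-playbook -i ./inventory/mycluster/hosts.yaml ./upgrade-cluster.yml"
        previous="$tag"
    done
}

# Usage:
#   print_upgrade_plan v2.14.0 v2.14.1 v2.14.2 v2.15.0 v2.15.1
```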

Having reached the latest Kubespray release, we may finish by upgrading and rebooting our nodes, one after the other:

$ kubectl cordon node1

$ kubectl drain --delete-emptydir-data --ignore-daemonsets node1

$ ssh root@node1

# apt-get upgrade

# apt-get dist-upgrade

# reboot

$ kubectl uncordon node1
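With more than a handful of nodes, those steps could be wrapped in a function. This is a sketch under assumptions, not what I ran: it refreshes the package lists first, runs the upgrade non-interactively with `-y`, and waits for the node to report Ready before uncordoning. `upgrade_and_reboot_node` and `REBOOT_GRACE` are hypothetical names.

```shell
# Hypothetical helper wrapping the steps above for one node.
# REBOOT_GRACE (seconds, an assumed knob) gives the node time to actually go
# down before waiting for it to report Ready again.
upgrade_and_reboot_node() {
    node="$1"
    kubectl cordon "$node" || return 1
    # --delete-emptydir-data needs kubectl 1.20+; older clients call it
    # --delete-local-data.
    kubectl drain --delete-emptydir-data --ignore-daemonsets "$node" || return 1
    # The SSH session usually drops during the reboot, so its status is ignored.
    ssh "root@$node" 'apt-get update && apt-get -y dist-upgrade && reboot' || true
    sleep "${REBOOT_GRACE:-60}"
    kubectl wait --for=condition=Ready "node/$node" --timeout=15m || return 1
    kubectl uncordon "$node"
}

# Usage:
#   upgrade_and_reboot_node node1
```

Stopping at the first failed step leaves the node cordoned, which is the safer state to investigate from.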

In my case, having disabled most Kubespray applications, I would also make sure the Ingress Controller, Registry, RBD & CephFS Provisioners are up to date:

$ vi roles/download/defaults/main.yml

$ find roles/kubernetes-apps/ingress_controller/ingress_nginx/ -name '*.j2'

$ vi roles/kubernetes-apps/ingress_controller/ingress_nginx/templates/ds-ingress-nginx-controller.yml.j2

$ kubectl edit -n ingress-nginx ds/ingress-nginx

[...]

In the end, upgrading that cluster from 1.18.3 to 1.19.9 took me about 10 hours. I suspect I could have gone straight to 1.19.9 and Kubespray v2.15.1, but this being my first time with those playbooks, I preferred to take my time and repeat the process until I was confident enough with it.

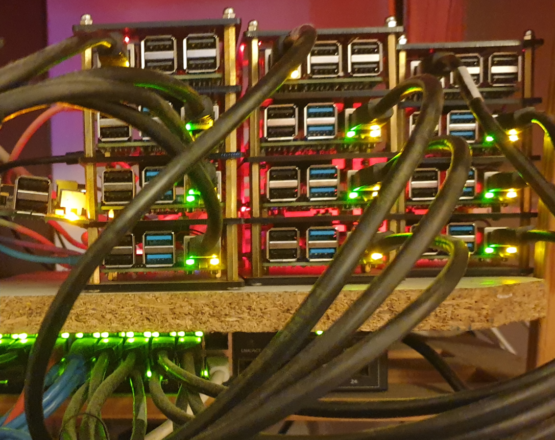

And while it was not on my mind in the first place, I also took a couple of hours to upgrade another cluster of mine, 11 Raspberry Pi nodes I deployed in January, from 1.19.3 to 1.19.9.

Having played with Kubespray for about a year, I was pretty confident that following their docs and releases wouldn’t be an issue. Still, it’s a relief to have gone through those upgrades.

Working otherwise with OpenShift 4, it’s kind of amazing to see a Kubernetes cluster upgrade without all the outages you would see with OpenShift: the etcd operator and cluster upgrading, the Kubernetes API, the OpenShift API, the SDN, CSI, the OAuth operator, then nodes draining and rebooting.

Kubespray upgrades are way smoother, and you decide when to upgrade applications and operators. It has the simplicity of OpenShift 3 and openshift-ansible, without the unreliability.